There is no oracle

Being Socratic in the age of the Bullshit Machines

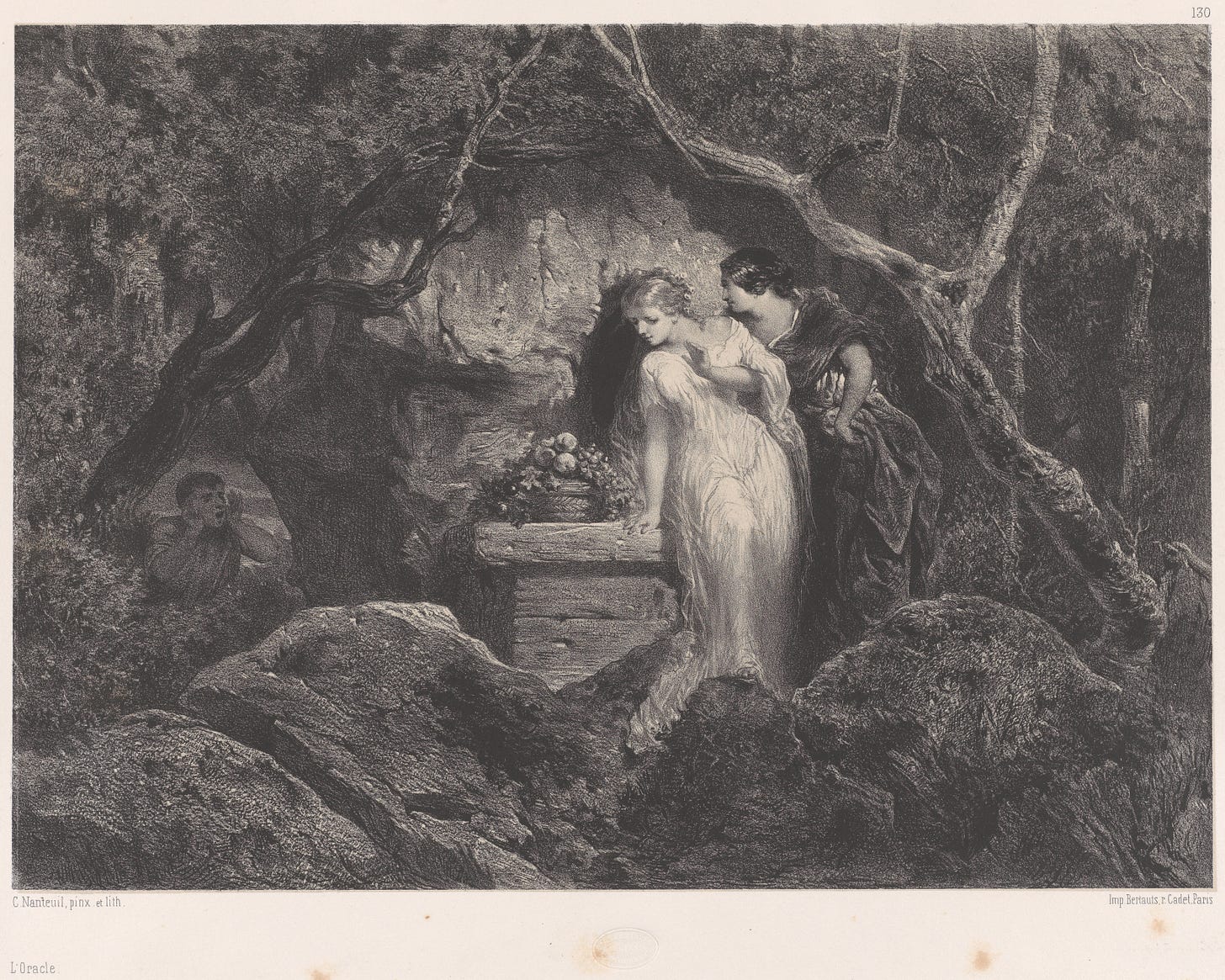

The legend of Socrates begins with a visit to the Delphic oracle, the Pythian priestess believed to speak on behalf of Apollo. Chaerephon, a friend to Socrates, visits the oracle and asks if anyone is wiser than Socrates. She tells him no; Socrates is the wisest.

For some, this would be an affirming message. A divine messenger, an oracle, has told you that you are the wisest man (perhaps in Athens, perhaps in the entire world). Socrates doesn’t take it this way, however. He’s troubled. At his trial, Socrates says:

> When I heard of this reply I asked myself: "Whatever does the god mean? What is his riddle? I am very conscious that I am not wise at all; what then does he mean by saying that I am the wisest? For surely he does not lie; it is not legitimate for him to do so." For a long time I was at a loss as to his meaning; then I very reluctantly turned to some such investigation as this: I went to one of those reputed wise, thinking that there, if anywhere, I could refute the oracle and say to it: "This man is wiser than I, but you said I was."

The rest of the story is familiar to anyone who has read Plato’s dialogues. Socrates develops a reputation for trouble-making, as he pokes and prods at the allegedly wise. He regularly confronts men who think themselves wise, often exposing their inconsistencies.

By the way, if you want to read some Platonic dialogues, the Republic is a good place to start. We’re reading it together starting at the end of March.

Socrates’ story begins with a refusal to defer to an oracle. He refuses to take the easy way out, to stop asking the big questions. In more modern terms: he owns it. He says, ‘I will continue to question, even the words of the gods themselves.’

Read this way, there might be something to the charge of impiety. Socrates is refusing to take Apollo, or at least his mouthpiece, at his word. I could see how a zealous Greek could believe that this was impious.

I also believe that it was the right thing to do.

I’ve noticed a new online trend. It’s a troubling one. When two people get into a debate online, very quickly one of the interlocutors will refer to AI to get a quick answer and settle the dispute. ‘Well, the AI says…,’ they’ll say. If you refuse to accept the answer, then you’re being, well, rather impious. The oracle has spoken; you should believe it.

Of course, this is a horrible strategy if you are at all interested in the truth. Generative AI is impressive and helpful in some contexts, but when it comes to fact-checking, these systems are Bullshit Machines.[1] They generate things that sound good and meaningful, but they have little to no attachment to the world.

Sometimes, relying on generative systems for fact-checking has hilarious, maybe disastrous, consequences. During the dust-up about Joe Biden’s pardon of his son Hunter (an egregious example of nepotism, giving a relative a blanket ten-year pardon), a CNN commentator sought to defend him by appealing to historical precedents. She posted a screenshot from a conversation with ChatGPT; that conversation listed several examples of presidents pardoning their close relations. The problem? It was bullshit. The AI made it up, and the commentator couldn’t be bothered to double-check anything.

Esquire made a similar mistake, claiming George H.W. Bush pardoned his son Neil. The problem? Neil Bush was not given a pardon by his father. That article is now retracted, with no explanation of what the mistake was or how Esquire’s editorial process allowed such a tremendous error.

In the case of CNN’s commentator, we know she used ChatGPT. As she put it when challenged: ‘Take it up with ChatGPT.’ Esquire never confirmed this was an instance of using AI, but it looks suspiciously like it. A complete historical fabrication that seems to fit all too perfectly into the writer’s narrative? That’s just the job for a Bullshit Machine.

Now, this cognitive offloading (without regard for bullshit) is everywhere. On X, users regularly ask ‘Grok, is this true?’ as a means of fact-checking their enemies. Pay no mind to the fact that Grok is just as unreliable as the rest of these systems. At least it sounds good.

The technology is new, more powerful than ever, and growing in influence, but the problem is nothing new. We have long wanted someone or something to tell us all the answers. We consult oracles of all kinds: Delphic priestesses, chicken bones, tea leaves, Tarot cards, internet encyclopedias, and fast-talking gurus. For millennia, we have cried out desperately for someone to relieve us of the responsibility of inquiry.

But like Socrates, we should refuse this temptation. Each of us should say, ‘I am a human being, in possession of rational faculties, and I will use them to their fullest extent.’ And we should say no to the Bullshit Machines which tell us that they can handle that for us. They can’t, and we shouldn’t want them to, anyway.

[1] See ‘ChatGPT is Bullshit’ by Hicks, Humphries, and Slater.

This is exactly what my Socratic machine was telling me.

I agree full-throatedly that we should all say, ‘I am a human being, in possession of rational faculties, and I will use them to their fullest extent.’ I should also admit up front that I work in the AI industry, adjacent to but not directly on today's frontier models. Still, I think takes like this and the "ChatGPT is bullshit" paper will not age well; they are instances of imagining the world as we want it to be rather than as it is.

Are the mistakes listed above genuine? Yes! Should the authors have checked their sources? Yes! But the argument boils down to "Chatbots sometimes do this, therefore they are fallible, therefore we should ignore them." I have bad news for anyone considering accepting this argument, which is that humans also do this sometimes, both accidentally and on purpose.

The "ChatGPT is bullshit" paper makes additional, outdated arguments based on the premise that the models are designed to "produce likely text" rather than true and helpful text. This is becoming less true over time; the "produce likely text" stage is now referred to as "pretraining", and it is followed by a huge and expensive step where the model builders attempt, of course not entirely successfully, to make these things honest and helpful.

Practically, we've reached the point where I find the models deeply useful. I ask them for creative help with a recipe. Sure, there's an example on the internet where a weak, outdated model said glue was a pizza topping, but the actual result today from frontier models is that I get useful advice that makes my food better. I discuss movie plots with the models and feel that I end up understanding the movie better, seeing multiple perspectives. I ask them to help me with hard math questions; sometimes the answer is wrong, but in the attempt I see the technique I should have used and learn the truth.

Philosophically, I think there are deep issues here, and it's better to avoid glib dismissals, especially because the underlying story is changing rapidly. We of course shouldn't think of these things as infallible oracles and give up our agency, but I'm rapidly coming to view them as essential cognitive prostheses that make me smarter and more productive and even more virtuous. Something big is happening.